Google I/O brings a fresh LLM to the AI race. Meet Bard AI’s new engine, PaLM 2. Can it compete with GPT4 and help Google in the AI race?

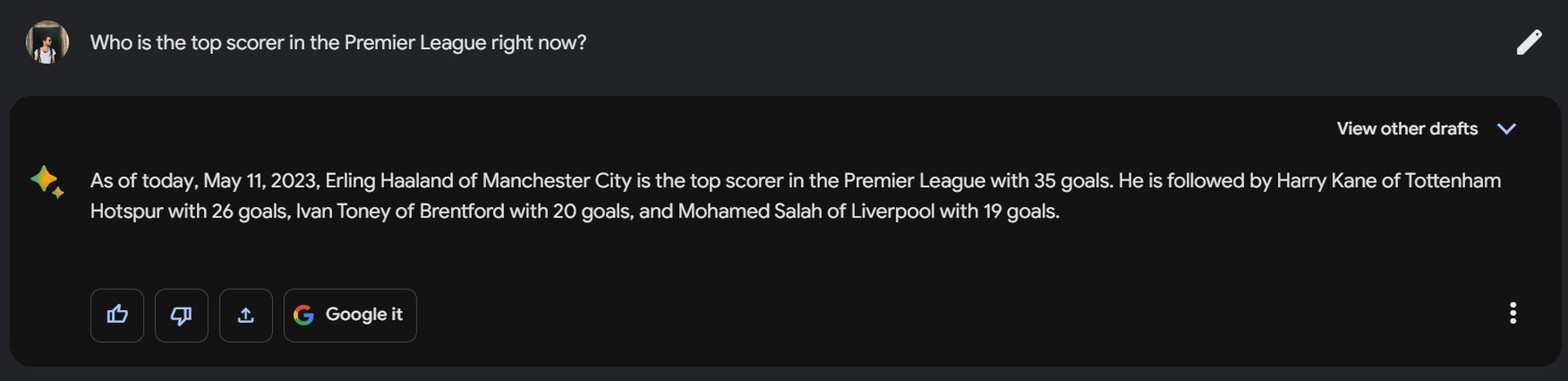

We asked this question directly to Google’s AI chatbot Bard, and here is its answer:

“Yes, PaLM 2 has the potential to compete with GPT4 and help Google in the AI race. Palm 2 is a newer model than GPT4, and it has been trained on a larger dataset of text and code. This means that Palm 2 has the potential to be more powerful and versatile than GPT4.

In addition, PaLM 2 is open source, which means that it is available to anyone to use and improve. This means that Palm 2 has the potential to be more widely adopted than GPT4, which is only available to a select few companies.

Finally, Google has a lot of resources and expertise in the field of AI. This means that Google is well-positioned to develop and improve PaLM 2, and to use it to create new and innovative products and services.

Overall, PaLM 2 has the potential to be a major player in the AI race. It is a powerful and versatile model that is available to everyone. Google has the resources and expertise to develop and improve PaLM 2, and to use it to create new and innovative products and services.”

-Bard AI

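It’s an ambitious answer, and not an entirely accurate one: PaLM 2 is not open source, and GPT-4 is available to the public through ChatGPT Plus and OpenAI’s API. Still, if Google doesn’t want to lose its position in the booming AI industry, the overall verdict has to be right.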

What is PaLM 2?

PaLM (Pathways Language Model) 2 is a new large language model (LLM) developed by Google and announced at its 2023 Google I/O conference. It is the second iteration of PaLM, which was announced in April 2022. It is designed to have improved capabilities in multilingualism, reasoning, and coding.

- Multilingualism: PaLM 2 is trained on text in over 100 languages, which helps it handle nuanced language such as idioms, poems, and riddles. Google says it can also pass advanced language proficiency exams at the “mastery” level.

- Reasoning: PaLM2 can handle logic, common sense reasoning, and mathematics better than previous models. It was trained on a wide-ranging dataset that includes scientific papers and web pages that contain mathematical expressions.

- Coding: PaLM 2 brings a significant coding upgrade. It was trained on more than 20 programming languages, including both widely used ones and specialized ones such as Prolog and Fortran. Google says its new LLM can even provide multilingual documentation explaining the code it generates, which could be a real help for programmers who want to understand the output rather than just copy it.

PaLM 2 is expected to power over 25 Google products and features, such as Google Assistant, Google Translate, Google Photos, and Google Search. It is also expected to compete with OpenAI’s GPT-4, another LLM whose parameter count has not been disclosed; the widely repeated figure of over one trillion parameters is an unofficial estimate.

Today, we’re introducing our latest PaLM model, PaLM 2, which builds on our fundamental research and our latest infrastructure. It’s highly capable at a wide range of tasks and easy to deploy. We’re announcing more than 25 products and features powered by PaLM 2 today. #GoogleIO

— Google (@Google) May 10, 2023

These improvements can be very helpful, and if you need a “light version” for mobile, Google has already thought about it. PaLM2 comes in four different sizes:

- Gecko

- Otter

- Bison

- Unicorn

Gecko is the smallest and fastest model that can work on mobile devices even when offline. Otter, Bison, and Unicorn are larger and more powerful models that can handle more complex tasks.

How does PaLM 2 work?

PaLM 2 is a neural network model that is trained on a massive dataset of text and code. The model is able to learn the relationships between words and phrases, and it can use this knowledge to perform a variety of tasks.

However, PaLM 2-powered Bard is still an experiment, according to Google. It can make mistakes, it may not understand every type of text, and it can hallucinate, meaning it may state made-up information with confidence. Google believes that as it continues to develop, it will become more reliable.

Google PaLM 2 parameters

Like OpenAI, Google has disclosed few technical details about how this model was trained, and it has not published exact parameter counts. The often-quoted figure of 540 billion parameters belongs to the original PaLM; according to the PaLM 2 technical report, the largest PaLM 2 model is significantly smaller than that, yet trained with more compute.

Google says PaLM 2 was built on its JAX framework and TPU v4 infrastructure.

What can you do with PaLM 2?

PaLM 2 is an advanced large language model (LLM) developed by Google, and it is already accessible to the general public. Google’s latest LLM is trained on a massive dataset of text and code, and it is able to perform a wide range of tasks, including:

- Natural language understanding: PaLM2 can understand the meaning of text, even if it is complex or ambiguous.

- Natural language generation: PaLM2 can generate text that is both coherent and grammatically correct.

- Code generation: PaLM2 can generate code in a variety of programming languages.

- Translation: PaLM2 can translate text from one language to another.

- Question answering: PaLM2 can answer questions about text, code, and the real world.

With PaLM 2, Bard promises pretty much everything GPT4 has to offer in ChatGPT, with up-to-date information.

How to use PaLM 2?

The simplest way to access PaLM 2 is through Bard AI. To use Bard, visit bard.google.com and sign in with your Google account.

PaLM 2 will also be available to developers through the PaLM API and through Vertex AI on Google Cloud. You can use it to generate text, translate languages, write different kinds of creative content, and answer questions in an informative way.

To learn more, see Google’s announcement and the PaLM 2 technical report.

Comparison: PaLM 2 vs GPT4

In recent years, there has been a surge of interest in large language models (LLMs). These models are trained on massive datasets of text and code, and they can be used for a variety of tasks, including natural language processing, machine translation, and code generation.

Two of the most prominent LLMs today are PaLM 2 and GPT-4, developed by Google and OpenAI, respectively. In this blog post, we will compare these two models and see how they differ in terms of size, data, capabilities, and applications.

PaLM 2 vs GPT4: Size

One of the main factors that distinguish LLMs is their size, measured by the number of parameters they have. Parameters are the numerical values that determine how the model processes the input and generates the output. All else being equal, more parameters tend to make a model more capable, but also more computationally expensive and difficult to train. Size is not everything, though: training data and compute matter as much.
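To make “parameters” concrete, here is a minimal Python sketch that counts the weights and biases in a small stack of fully connected layers. The layer sizes are made up for illustration; they do not describe PaLM 2 or GPT-4, whose architectures are far larger and not fully disclosed.

```python
def dense_layer_params(inputs: int, outputs: int) -> int:
    """One weight per input-output pair, plus one bias per output."""
    return inputs * outputs + outputs


def count_params(layer_sizes: list[int]) -> int:
    """Total parameters for a stack of dense layers, e.g. [512, 2048, 512]."""
    return sum(
        dense_layer_params(n_in, n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical toy network: 512 -> 2048 -> 512
toy_total = count_params([512, 2048, 512])
print(f"{toy_total:,} parameters")
```

Because the weight count grows with the product of the layer widths, doubling every width roughly quadruples the total, which is one reason large models become expensive so quickly.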

PaLM 2 has four submodels with different sizes: Unicorn (the largest), Bison, Otter, and Gecko (the smallest). Google has not disclosed the exact number of parameters for each submodel. OpenAI has likewise not disclosed GPT-4’s parameter count or the details of its architecture. Both models use a transformer architecture, which is a neural network design that enables parallel processing and long-range dependencies.
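The “long-range dependencies” in a transformer come from attention, where every position in the input can look at every other position. Below is a minimal pure-Python sketch of scaled dot-product attention, the core operation of the architecture. It is a teaching example under simplifying assumptions: real models add learned projections, many attention heads, and run on accelerators, and the toy vectors here are invented.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Toy example: two positions, two-dimensional vectors.
toy_keys = [[1.0, 0.0], [0.0, 1.0]]
toy_values = [[10.0, 0.0], [0.0, 10.0]]
print(attention([[5.0, 0.0]], toy_keys, toy_values))
```

The query in the toy example points in the same direction as the first key, so the output leans heavily toward the first value vector. Each query is processed independently of the others, which is what makes the computation easy to run in parallel.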

PaLM 2 vs GPT4: Data

Another factor that distinguishes LLMs is the data they are trained on. Data is the source of knowledge and skills for the models, and it affects their performance and generalization ability. The more diverse and high-quality data a model is trained on, the more versatile and accurate it is.

PaLM 2 is trained on text in over 100 languages and a variety of domains, such as mathematics, science, programming, literature, and more. It uses a curated dataset that filters out low-quality or harmful text, such as spam, hate speech, or misinformation. The “Pathways” in its name refers to Google’s system for training a single model efficiently across many accelerator chips; it is training infrastructure rather than a separate learning technique.

OpenAI has not published the details of GPT-4’s training data. According to its technical report, the model was pre-trained on publicly available data, such as internet data, and on data licensed from third-party providers, and was then fine-tuned using reinforcement learning from human feedback (RLHF). Like any model trained on web text, it can inherit the biases or errors of that text.

PaLM 2 vs GPT4: Capabilities

The third factor that distinguishes LLMs is their capabilities, or what they can do with the text they generate. Capabilities depend on both the size and the data of the models, as well as the tasks they are fine-tuned for. Fine-tuning is the process of adapting a general model to a specific task or domain by training it on a smaller dataset relevant to that task or domain.

PaLM 2 has improved capabilities in logic and reasoning thanks to its broad training in those areas. It can solve advanced mathematical problems, explain its steps, and provide diagrams. It can also write and debug code in over 20 programming languages and provide documentation in multiple languages. Finally, it can generate natural language text for various tasks and domains, such as translation, summarization, question answering, and chatbot conversation, and through Bard it can draw on up-to-date information.

However, GPT-4 has more versatile capabilities than Google’s LLM, at least for now. It can generate natural language text for almost any task or domain imaginable, including translation, summarization, question answering, chatbot conversation, text completion, text generation, text analysis, text synthesis, text classification, text extraction, text paraphrasing, and more.

Verdict: PaLM 2 vs GPT4

It depends on your needs. If you need an LLM that’s strong at reasoning and logic, with a “Google it” button, then PaLM 2 is the better choice. If you need an LLM that’s fast, good at generating text and has proved itself, then GPT-4 is the better choice.

Ultimately, the best way to choose an LLM is to try them both out and see which one works best for you. AI is a journey limited only by your imagination.


Image courtesy: Google
