Our three-part Business Intelligence series has looked at the key developments in BI from the 1960s all the way to the late 1990s.

In the first edition, we focused on the way data storage changed from hierarchical database management systems (DBMSs), like IBM’s IMS in the 1960s, to network DBMSs and then to relational database management systems (RDBMSs) in the late 1970s.

The second part of the series investigated the technological advancements of the 1980s and 1990s, predominantly mapping the evolution from mainframes to personal computers and from DBMSs to RDBMSs, along with the emergence of new methods and tools like data warehousing, Extract, Transform, Load (ETL), and Online Analytical Processing (OLAP).

In this edition, we will take a brief look at how BI transitioned from a tool-based, IT-centric activity to one that is now accessible to technical and non-technical users alike.

The Transformation of BI 1.0

BI 1.0 refers to the era of BI that spanned the late 1990s and early 2000s. With the advent and development of data warehousing, SQL, ETL and OLAP, data was consolidated into a unified system and queries could be written to extract data from many tables at once, ultimately helping companies access and store their data more effectively.

At its core, BI 1.0 could be distilled into two components: data and reports, or aggregation and presentation. As Neil Raden, Principal Analyst at Hired Brains Research, commented, “most of the effort in BI…[was] focused on data integration, data quality, data cleansing, data warehouse, data mart, data modelling, data governance, data stewardship.”

However, within this period, the major problem with BI projects was that they were still owned by IT departments, data was siloed, and reports often took extended periods of time to be delivered to management. BI solutions were predominantly designed for an analytics-trained minority and those who were already capable of understanding data models.

BI 2.0 and the Influence of Web 2.0: Mid-2000s

The mid-to-late 2000s marked a significant step forward for BI as it entered its acclaimed 2.0 phase; it went far beyond simple data and reporting by integrating near real-time processing, collaboration, self-service, and discoverability, as well as offline and online access.

Whereas BI 1.0 centered mostly around the refinement of different tools – the aforementioned data warehouse, OLAP, and ETL technologies – BI 2.0 focused mostly on using the connectivity of the Web to create a BI environment that would encourage access, flexibility and getting the right data to the right people.

Many of these changes were influenced by the direction that the Web began to take in the early 2000s (often dubbed “Web 2.0”) with social networking and web applications. One significant example was the arrival of platforms like Facebook, Twitter, and even Google, where the consumer became an important source of critique: anyone could exchange opinions on widely accessible sites, as well as gain instant information on competitors.

Businesses in the mid-2000s therefore required access to immense amounts of real-time information in order to, among other things, track customers’ reactions to their products, what their competitors were offering, and, with the advancement of mobile and tablet technologies, the best interfaces on which to approach their consumers. In other words, the “new” Web environment demanded a simultaneous reconstitution of BI technologies that emphasized agility, dynamism, and immediacy.

BI 2.5 and the Democratization of Data: 2000s – Current

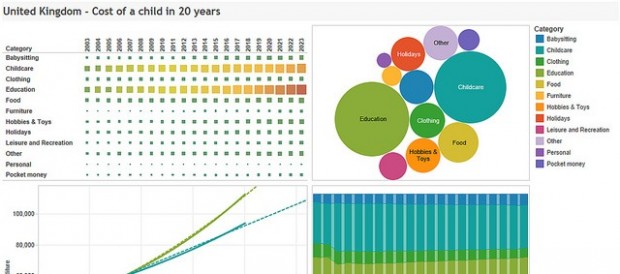

After this explosion of data throughout the 2000s, businesses in our current environment now require visualisation tools (interactive dashboards, bar graphs, animations) to effectively analyse the information coming from inside and outside the organisation. BI is becoming jointly governed by IT and business users themselves, and is aimed at empowering the ‘Data Explorer’ through content delivery and creation. The emergence of visualisation tools and other techniques means that BI uptake across the organisation is rising, giving business users the ability to explore their data independently.

Everyone from huge IT companies like Oracle, IBM, SAP, SAS, and Microsoft to other companies like Tableau, Birst, QlikView, TIBCO Jaspersoft, and SiSense (the list continues) is competing to make data easier to store, more accessible across devices, and processable at a speed like never before.

As such, the battle between BI companies today is to provide speed, affordability, and high-capacity storage. With mobile technology and PCs generating such incredible amounts of data (estimated at 2.5 quintillion bytes a day), companies are no longer looking for access alone. Rather, the data has to be accessed at breakneck speed across all devices, instantly analysable, and stored in a cost-effective manner.

It is no surprise, therefore, that the BI market is expected to reach $20.8 billion by 2018, at an estimated CAGR of 8.3%, of which $4 billion is expected to come from cloud-based BI. The same goes for data visualisation, with forecasts suggesting the market will grow at a CAGR of 9.21% to reach $6.40 billion by 2019.

Whether BI is set to enter yet another phase – BI 3.0? – and what it will look like is as yet undetermined. But as Brian Gentile suggests, the fierce competition among BI vendors may already have reached its tipping point:

“We joke about it inside TIBCO Jaspersoft ‘here’s the new competitor of the week’. Everyone apparently thinks that they can do analytics because that’s what it looks like. While this will clearly generate some good ideas, not all these companies are going to make it. Many of them are going to fail, or be acquired, and so on along the path.”

With this in mind, the History of Business Intelligence series has come to a conclusion. However, we will now look at dispelling the myths around BI, compare vendors against one another and offer guidance as to which technologies are right for your specific business needs.

Furhaad worked as a researcher/writer for The Times of London and is a regular contributor to the Huffington Post. He studied philosophy on a dual programme with the University of York (U.K.) and Columbia University (U.S.). He is a native of London, United Kingdom.

(Image Credit: M.A. Cabrera Luengo)

PREVIOUS ENTRIES:

The History of BI: The 1960′s and 70′s

This is the first edition of a three-part series and gives a brief overview of the history of business intelligence. Starting in the 1960s and 1970s, the article looks at the advancements made in data storage, database management systems, and companies that were pioneering BI from the early stages.

The History of BI: The 1980′s and 90′s

The second edition of our business intelligence series takes a deeper look at the transition from DBMSs to RDBMSs, and the emergence of data warehousing, ETL, and OLAP. The 1980s and 1990s were revolutionary in many respects for BI, and ultimately transformed the way businesses extracted value from their data.

“Rather, the data has to be accessed in breakneck speeds across all devices, instantly analysable and stored in a cost effective manner.”

What’s the point of collecting and storing all that data if people can’t get at it when they need it? And sometimes that means accessing it via mobile devices. BI providers need to be fast, secure, and always looking for ways to improve the user experience.