In this post, we’re going to get our hands dirty with code, but before we do, let me introduce the example problems we’re going to solve today.

1) Predicting House Prices

We want to predict the values of particular houses, based on the square footage.

2) Predicting Which TV Show Will Have More Viewers Next Week

The Flash and Arrow are my favourite TV shows. I want to find which show will have more viewers in the coming week.

3) Replacing Missing Values in a Dataset

Often we have to work with datasets with missing values; this is less of a hands-on walkthrough, but I’ll talk you through how you might go about replacing these values with linear regression.

So, Let’s Dive Into the Coding (Nearly)

Before we do, it would be a good idea to install the packages from my previous post, Python Packages for Data Mining.

1) Predicting House Prices

We have the following dataset:

| Entry No. | Square_Feet | Price |
|---|---|---|
| 1 | 150 | 6450 |
| 2 | 200 | 7450 |
| 3 | 250 | 8450 |
| 4 | 300 | 9450 |
| 5 | 350 | 11450 |
| 6 | 400 | 15450 |
| 7 | 600 | 18450 |

Steps to Follow:

- With linear regression, we know that we have to find a linear relationship within the data so we can get θ0 and θ1

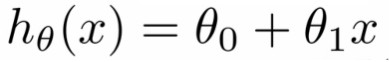

- Our hypothesis equation looks like this:

- hθ(x) is the price (which we are going to predict) for a particular square_feet value, meaning price is a linear function of square_feet

- θ0 is a constant

- θ1 is the regression coefficient
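Written out, the hypothesis described by the bullets above is the usual straight-line form. This is a reconstruction from the definitions given here, in case the equation image does not load:

```latex
h_\theta(x) = \theta_0 + \theta_1 x
```

For example, with θ0 = 1000 and θ1 = 30 (made-up numbers, purely for illustration), a house of 200 square feet would be priced at 1000 + 30 × 200 = 7000.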

And now, to coding:

Step 1.

- Open your favourite text editor, and name a file predict_house_price.py.

- We’re going to use the following packages in our programme, so copy them into your predict_house_price.py file.

[code language="python"]# Required Packages
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from sklearn import datasets, linear_model[/code]

- Just run your code once. If your programme is error-free, then most of the work on Step 1 is done. If you face any errors, this means you missed some packages, so head back to the packages page.

- Install all the packages in that blog post and run your code once again. This time hopefully you won’t face any problems.

- Now that your programme is error-free, we can proceed to…

Step 2.

- I stored our data set in a .csv file named input_data.csv

- So let’s write a function to get our data into X values (square_feet) and Y values (price)

[code language="python"]# Function to get data
def get_data(file_name):
    data = pd.read_csv(file_name)
    X_parameter = []
    Y_parameter = []
    for single_square_feet, single_price_value in zip(data['square_feet'], data['price']):
        X_parameter.append([float(single_square_feet)])
        Y_parameter.append(float(single_price_value))
    return X_parameter, Y_parameter[/code]

Line 3: Reading the .csv data into a Pandas dataframe.

Lines 6-9: Converting the Pandas dataframe data into the X_parameter and Y_parameter lists, and returning them.
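The get_data function above reads a file called input_data.csv with square_feet and price columns. If you want to recreate that file from the table at the start of this section, a minimal sketch could look like this. The header names are an assumption inferred from the column lookups in get_data, and write_input_data is just an illustrative helper name, not part of any library.

```python
import csv

# Rows copied from the house price table: (square_feet, price)
ROWS = [
    (150, 6450), (200, 7450), (250, 8450), (300, 9450),
    (350, 11450), (400, 15450), (600, 18450),
]


def write_input_data(path="input_data.csv"):
    """Write the house price table to a CSV file with a header row."""
    with open(path, "w", newline="") as handle:
        writer = csv.writer(handle)
        # Assumed header names, chosen to match the lookups in get_data
        writer.writerow(["square_feet", "price"])
        writer.writerows(ROWS)


write_input_data()
```

Run it once from the same folder as predict_house_price.py so that the relative file name resolves.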

So, let’s print out our X_parameters and Y_parameters:

[code language="python"]X, Y = get_data('input_data.csv')
print(X)
print(Y)[/code]

Script Output:

[[150.0], [200.0], [250.0], [300.0], [350.0], [400.0], [600.0]]

[6450.0, 7450.0, 8450.0, 9450.0, 11450.0, 15450.0, 18450.0]

[Finished in 0.7s]

Step 3.

Now let’s fit our X_parameters and Y_parameters to a linear regression model. We’re going to write a function which takes X_parameters, Y_parameters and the value you want to predict as input, and returns θ0, θ1 and the predicted value.

[code language="python"]# Function for Fitting our data to Linear model
def linear_model_main(X_parameters, Y_parameters, predict_value):
    # predict_value is a single square_feet figure, e.g. 700
    # Create linear regression object
    regr = linear_model.LinearRegression()
    regr.fit(X_parameters, Y_parameters)
    predict_outcome = regr.predict([[predict_value]])  # predict() expects a 2D array
    predictions = {}
    predictions['intercept'] = regr.intercept_
    predictions['coefficient'] = regr.coef_
    predictions['predicted_value'] = predict_outcome
    return predictions[/code]

Lines 5-6: First, we’re creating a linear model and then training it with our X_parameters and Y_parameters.

Lines 8-12: We’re creating a dictionary named predictions, storing θ0, θ1 and the predicted value in it, and returning the predictions dictionary as output.

So let’s call our function with 700 as the value to predict:

[code language="python"]X, Y = get_data('input_data.csv')
predictvalue = 700
result = linear_model_main(X, Y, predictvalue)
print("Intercept value ", result['intercept'])
print("coefficient", result['coefficient'])
print("Predicted value: ", result['predicted_value'])[/code]

Script output:

Intercept value 1771.80851064

coefficient [ 28.77659574]

Predicted value: [ 21915.42553191]

[Finished in 0.7s]

Here, the intercept value is just the θ0 value and the coefficient value is the θ1 value.

We got the predicted value as 21915.4255, which means we’ve done our job of predicting the house price!
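As a sanity check, the same three numbers can be reproduced without scikit-learn. The sketch below uses the textbook closed-form least-squares formulas for a single input variable; fit_line is an illustrative helper name of my own, and this is not how scikit-learn computes the fit internally.

```python
# House data copied from the table: square footage and price
square_feet = [150, 200, 250, 300, 350, 400, 600]
price = [6450, 7450, 8450, 9450, 11450, 15450, 18450]


def fit_line(xs, ys):
    """Return (theta0, theta1) for the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: how x and y vary together, divided by how much x varies
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    theta1 = s_xy / s_xx
    # Intercept: the fitted line always passes through (mean_x, mean_y)
    theta0 = mean_y - theta1 * mean_x
    return theta0, theta1


theta0, theta1 = fit_line(square_feet, price)
predicted = theta0 + theta1 * 700

print("Intercept value", round(theta0, 4))      # 1771.8085
print("coefficient", round(theta1, 4))          # 28.7766
print("Predicted value:", round(predicted, 4))  # 21915.4255
```

The figures agree with the script output above, which is a quick way to convince yourself that the intercept and coefficient really are θ0 and θ1 of the hypothesis.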

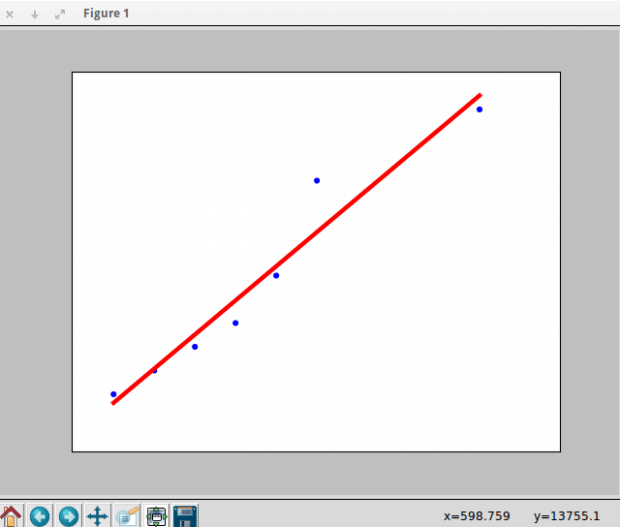

For checking purposes, we should see how well our data fits the linear regression. So let’s write a function which takes X_parameters and Y_parameters as input and shows the fitted line over our data.

[code language="python"]# Function to show the results of linear fit model
def show_linear_line(X_parameters, Y_parameters):
    # Create linear regression object
    regr = linear_model.LinearRegression()
    regr.fit(X_parameters, Y_parameters)
    # Blue dots are the real data, the red line is the fitted model
    plt.scatter(X_parameters, Y_parameters, color='blue')
    plt.plot(X_parameters, regr.predict(X_parameters), color='red', linewidth=4)
    # Hide the axis ticks
    plt.xticks(())
    plt.yticks(())
    plt.show()[/code]

So let’s call our show_linear_line function:

[code language="python"]show_linear_line(X, Y)[/code]
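If you would like numbers to go with the picture, one option is to list how far each house sits from the fitted line. This is only a rough sketch: it plugs in the rounded θ0 and θ1 from the script output above rather than refitting, and the variable names are my own.

```python
# House data copied from the table: square footage and price
square_feet = [150, 200, 250, 300, 350, 400, 600]
price = [6450, 7450, 8450, 9450, 11450, 15450, 18450]

# theta0 and theta1 as printed in the earlier script output (rounded)
theta0 = 1771.80851064
theta1 = 28.77659574

residuals = []
for x, y in zip(square_feet, price):
    fitted = theta0 + theta1 * x  # point on the red line
    residual = y - fitted         # positive means the house is above the line
    residuals.append(residual)
    print(x, y, round(fitted, 2), round(residual, 2))
```

With these figures, the 400 square foot house sits furthest from the line (about 2167.55 above it), which is harder to read off the plot once the axis ticks are hidden.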

2) Predicting Which TV Show Will Have More Viewers Next Week

The Flash is an American television series developed by writer/producers Greg Berlanti, Andrew Kreisberg and Geoff Johns, airing on The CW. It is based on the DC Comics character Flash (Barry Allen), a costumed superhero crime-fighter with the power to move at superhuman speeds, who was created by Robert Kanigher, John Broome and Carmine Infantino. It is a spin-off from Arrow, existing in the same universe. The pilot for the series was written by Berlanti, Kreisberg and Johns, and directed by David Nutter. The series premiered in North America on October 7, 2014, where the pilot became the most watched telecast for The CW.

Arrow is an American television series developed by writer/producers Greg Berlanti, Marc Guggenheim, and Andrew Kreisberg. It is based on the DC Comics character Green Arrow, a costumed crime-fighter created by Mort Weisinger and George Papp. It premiered in North America on The CW on October 10, 2012, with international broadcasting taking place in late 2012. Primarily filmed in Vancouver, British Columbia, Canada, the series follows billionaire playboy Oliver Queen, portrayed by Stephen Amell, who, after five years of being stranded on a hostile island, returns home to fight crime and corruption as a secret vigilante whose weapon of choice is a bow and arrow. Unlike in the comic books, Queen does not initially go by the alias “Green Arrow”.

As both of these shows are tied for the title of my favourite TV show, I’m always interested to know which one is more popular with other people, and which one will ultimately win the ratings war.

So, let’s write a program which predicts which TV show will have more viewers.

We need a dataset which shows the viewers for each episode. Luckily, I got this data from Wikipedia and prepared a .csv file. It looks like this:

| FLASH_EPISODE | FLASH_US_VIEWERS | ARROW_EPISODE | ARROW_US_VIEWERS |
|---|---|---|---|
| 1 | 4.83 | 1 | 2.84 |
| 2 | 4.27 | 2 | 2.32 |
| 3 | 3.59 | 3 | 2.55 |
| 4 | 3.53 | 4 | 2.49 |
| 5 | 3.46 | 5 | 2.73 |
| 6 | 3.73 | 6 | 2.6 |
| 7 | 3.47 | 7 | 2.64 |
| 8 | 4.34 | 8 | 3.92 |
| 9 | 4.66 | 9 | 3.06 |

(Viewers are in millions)

Steps to solving this problem:

- First we have to convert our data to X_parameters and Y_parameters, but here we have two sets of X_parameters and Y_parameters. So, let’s name them flash_x_parameter, flash_y_parameter, arrow_x_parameter and arrow_y_parameter.

- Then we have to fit our data to two different linear regression models: the first for Flash, and the other for Arrow.

- Then we have to predict the number of viewers for the next episode of both TV shows.

- Then we can compare the results and guess which show will have more viewers.
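Before reaching for scikit-learn, the four steps above can be sketched in plain Python with the table values typed in directly. Treat this as a rough illustration: predict_next is a helper name I made up, and taking episode 10 as the "next" episode is my assumption based on the nine episodes listed in the table.

```python
# Viewer figures (in millions) copied from the table, episodes 1 to 9
episodes = [1, 2, 3, 4, 5, 6, 7, 8, 9]
flash_viewers = [4.83, 4.27, 3.59, 3.53, 3.46, 3.73, 3.47, 4.34, 4.66]
arrow_viewers = [2.84, 2.32, 2.55, 2.49, 2.73, 2.6, 2.64, 3.92, 3.06]


def predict_next(xs, ys, next_x):
    """Fit a least-squares line to (xs, ys) and evaluate it at next_x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    theta1 = s_xy / s_xx
    theta0 = mean_y - theta1 * mean_x
    return theta0 + theta1 * next_x


next_episode = 10  # assumption: the episode after the nine in the table

# One model per show, then compare the two predictions
flash_prediction = predict_next(episodes, flash_viewers, next_episode)
arrow_prediction = predict_next(episodes, arrow_viewers, next_episode)

if flash_prediction > arrow_prediction:
    print("The Flash is predicted to have more viewers next week")
else:
    print("Arrow is predicted to have more viewers next week")
```

The rest of this section builds the same comparison with pandas and scikit-learn, reading the figures from a .csv file instead.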

Step 1

Let’s import our packages:

[code language="python"]# Required Packages
import csv
import sys
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from sklearn import datasets, linear_model[/code]

Step 2

Let’s write a function which takes our data set as input and returns the flash_x_parameter, flash_y_parameter, arrow_x_parameter and arrow_y_parameter values.

[code language="python"]# Function to get data
def get_data(file_name):
    data = pd.read_csv(file_name)
    flash_x_parameter = []
    flash_y_parameter = []
    arrow_x_parameter = []
    arrow_y_parameter = []
    for x1, y1, x2, y2 in zip(data['flash_episode_number'], data['flash_us_viewers'],
                              data['arrow_episode_number'], data['arrow_us_viewers']):
        flash_x_parameter.append([float(x1)])
        flash_y_parameter.append(float(y1))
        arrow_x_parameter.append([float(x2)])
        arrow_y_parameter.append(float(y2))
    return flash_x_parameter, flash_y_parameter, arrow_x_parameter, arrow_y_parameter[/code]

Now that we have our parameters, let’s write a function which takes them as input and prints a prediction of which show will have more viewers.

[code language="python"]# Function to know which Tv show will have more viewers
def more_viewers(x1, y1, x2, y2):
    # Episodes 1-9 are in the data set, so the next episode is number 10.
    # predict() expects a 2D array: one row per sample, one column per feature.
    next_episode = [[10]]
    regr1 = linear_model.LinearRegression()
    regr1.fit(x1, y1)
    predicted_value1 = regr1.predict(next_episode)
    print(predicted_value1)
    regr2 = linear_model.LinearRegression()
    regr2.fit(x2, y2)
    predicted_value2 = regr2.predict(next_episode)
    # print(predicted_value1)
    # print(predicted_value2)
    if predicted_value1[0] > predicted_value2[0]:
        print("The Flash Tv Show will have more viewers for next week")
    else:
        print("Arrow Tv Show will have more viewers for next week")[/code]

Let’s put everything in one file. Open your editor, create a file named prediction.py and copy the complete code below into it.

[code language="python"]# Required Packages
import csv
import sys
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from sklearn import datasets, linear_model

# Function to get data
def get_data(file_name):
    data = pd.read_csv(file_name)
    flash_x_parameter = []
    flash_y_parameter = []
    arrow_x_parameter = []
    arrow_y_parameter = []
    for x1, y1, x2, y2 in zip(data['flash_episode_number'], data['flash_us_viewers'],
                              data['arrow_episode_number'], data['arrow_us_viewers']):
        flash_x_parameter.append([float(x1)])
        flash_y_parameter.append(float(y1))
        arrow_x_parameter.append([float(x2)])
        arrow_y_parameter.append(float(y2))
    return flash_x_parameter, flash_y_parameter, arrow_x_parameter, arrow_y_parameter

# Function to know which Tv show will have more viewers
def more_viewers(x1, y1, x2, y2):
    # Episodes 1-9 are in the data set, so the next episode is number 10.
    # predict() expects a 2D array: one row per sample, one column per feature.
    next_episode = [[10]]
    regr1 = linear_model.LinearRegression()
    regr1.fit(x1, y1)
    predicted_value1 = regr1.predict(next_episode)
    print(predicted_value1)
    regr2 = linear_model.LinearRegression()
    regr2.fit(x2, y2)
    predicted_value2 = regr2.predict(next_episode)
    # print(predicted_value1)
    # print(predicted_value2)
    if predicted_value1[0] > predicted_value2[0]:
        print("The Flash Tv Show will have more viewers for next week")
    else:
        print("Arrow Tv Show will have more viewers for next week")

x1, y1, x2, y2 = get_data('input_data.csv')
# print(x1, y1, x2, y2)
more_viewers(x1, y1, x2, y2)[/code]

You may be able to guess which show will have more viewers from the data set, but run this programme to check it and see.

3) Replacing Missing Values in a Dataset

Sometimes, we have to do analysis on data which contains missing values. Some people remove the rows with missing values and continue the analysis, and some replace the missing values with the min, max or mean value. Of the three, the mean is usually the better choice, but we can also use linear regression to replace those missing values very effectively.

This approach goes something like this.

First, we have to find the column in which we’re going to replace missing values, and work out which of the other columns that missing data depends on. Treat the column with missing values as Y_parameters and the columns it depends on most as X_parameters, then fit the rows where the value is present to a linear regression model. Now predict the missing values from the X_parameters columns of the rows where the value is absent.

Once this process is completed, we will get data without any missing values, leaving us free to analyse this data.
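To make the idea concrete, here is a minimal sketch in plain Python. It reuses the house table from the first example with one price deliberately blanked out, so the data is a hypothetical illustration rather than a real data set with gaps, and impute_with_regression is a name I invented for this post.

```python
# Hypothetical example: the house table with one price blanked out (None)
rows = [
    (150, 6450), (200, 7450), (250, 8450), (300, None),
    (350, 11450), (400, 15450), (600, 18450),
]


def impute_with_regression(rows):
    """Fill missing y values using a line fitted to the complete rows only."""
    known = [(x, y) for x, y in rows if y is not None]
    n = len(known)
    mean_x = sum(x for x, _ in known) / n
    mean_y = sum(y for _, y in known) / n
    # Closed-form least-squares slope and intercept for one input column
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in known)
    s_xx = sum((x - mean_x) ** 2 for x, _ in known)
    theta1 = s_xy / s_xx
    theta0 = mean_y - theta1 * mean_x
    # Keep known values as they are, predict only where y is missing
    return [(x, y if y is not None else theta0 + theta1 * x) for x, y in rows]


filled = impute_with_regression(rows)
for x, y in filled:
    print(x, round(y, 2))
```

Only the rows with a known price are used for fitting, and the known values are left untouched. With more than one X column you would fit a multiple regression instead, which is where a library such as scikit-learn becomes more convenient.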

For practice, I’ll leave this problem to you, so please find a data set with missing values online and try solving it. Leave a comment once you’ve completed it. I’d love to see your results.

Small personal note:

I want to share my personal experience with data mining. I remember that in my introductory data mining classes, the instructor would start slow, explaining some interesting areas where we can apply data mining and some very basic concepts. Then suddenly, the difficulty level would skyrocket. This made some of my classmates feel extremely frustrated and intimidated by the course, and ultimately killed their interest in data mining. So I want to avoid doing this in my blog posts. I want to make things more easygoing, which is why I tried to use interesting examples, to make my readers more comfortable learning without getting bored or intimidated.

Thanks for reading this far. Please leave your questions or suggestions in the comment box, and I’d be delighted to get back to you.

Originally posted on DataAspirant.

Featured image courtesy of Wikia; this is promotional material for Arrow, and so is copyright of The CW Network.