Sander Dieleman, a Spotify intern and Ph.D. student, has published a lengthy report on his grand plans to revolutionise Spotify’s recommender system. In short, he plans to use deep learning to recommend songs that actually sound like what you listen to, so that it’s not just the most popular songs that rise to the top of your recommended playlist.

Historically, Spotify has used collaborative filtering to recommend songs from its immense musical library. As the blog post states, “The idea of collaborative filtering is to determine the users’ preferences from historical usage data. For example if two users listen to largely the same set of songs, their tastes are probably similar.” This technique hinges on consumption and behaviour patterns, rather than the content itself, and is the principle behind many book, movie and music recommender systems.
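To make the idea concrete, here is a minimal sketch of user-to-user collaborative filtering in Python. It is an illustration only, not Spotify's implementation: the `jaccard` and `recommend` helpers, the user names and the song IDs are all hypothetical, and set overlap (Jaccard similarity) is just one simple way to measure how "largely the same" two listening histories are.

```python
def jaccard(a, b):
    """Overlap between two sets of song IDs: 0.0 (disjoint) to 1.0 (identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(target, histories):
    """Suggest songs played by the most similar other user but not by the target."""
    others = [user for user in histories if user != target]
    nearest = max(others, key=lambda user: jaccard(histories[target], histories[user]))
    return sorted(set(histories[nearest]) - set(histories[target]))


# Hypothetical listening histories (assumed data, for illustration only).
histories = {
    "alice": {"song_a", "song_b", "song_c"},
    "bob": {"song_a", "song_b", "song_c", "song_d"},
    "carol": {"song_x", "song_y"},
}

print(recommend("alice", histories))  # ['song_d']
```

Note that nothing here looks at the audio: a song nobody has played yet can never be recommended, however good it sounds.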

“Unfortunately,” the blog post states, the content-agnostic nature of these systems “turns out to be their biggest flaw. Because of their reliance on usage data, popular items will be much easier to recommend than unpopular items, as there is more usage data available for them. This is usually the opposite of what we want. For the same reason, the recommendations can often be rather boring and predictable.”

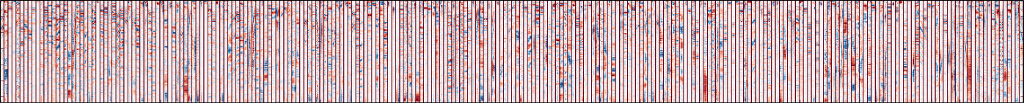

Dieleman’s solution was a deep learning system which actually analysed the content of the songs. He used 30-second clips of 500,000 songs to train the algorithm and another 500,000 to test it. Here’s a visualisation of one neural network layer. As Dieleman explains: “Negative values are red, positive values are blue and white is zero. Note that each filter is only four frames wide. The individual filters are separated by dark red vertical lines.”
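As a rough illustration of what a filter "four frames wide" does, the sketch below slides a small weight grid along the time axis of a toy spectrogram and records how strongly each window matches it. This is an assumption-laden simplification: the `apply_filter` helper, the two-bin spectrogram and the hand-picked weights are hypothetical, whereas the network in the report learns its weights from audio and stacks several layers on top.

```python
def apply_filter(spectrogram, filt):
    """Slide a filter over time and return one activation per valid position.

    spectrogram: list of frames, each a list of frequency-bin energies.
    filt: list of weight rows, one row per frame the filter spans.
    """
    width = len(filt)
    activations = []
    for start in range(len(spectrogram) - width + 1):
        total = 0.0
        for offset in range(width):
            frame = spectrogram[start + offset]
            for weight, energy in zip(filt[offset], frame):
                total += weight * energy
        activations.append(total)
    return activations


# Toy spectrogram: 6 frames x 2 bins [low, high], with a low note in frames 1-4.
spectrogram = [[0, 0], [1, 0], [1, 0], [1, 0], [1, 0], [0, 0]]

# Hypothetical filter, four frames wide: positive for low energy, negative for high.
filt = [[1, -1], [1, -1], [1, -1], [1, -1]]

print(apply_filter(spectrogram, filt))  # [3.0, 4.0, 3.0]
```

The activation peaks where the four-frame window lines up exactly with the sustained low note, which is the sense in which a filter "picks up" a particular sound.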

Each filter seems to pick up a particular characteristic, such as vocal thirds or a bass drum. The report contains sample playlists based on the 256 low-level filters in the neural network, such as Filter 14 (“vibrato singing”) or Filter 242 (“ambience”), which is particularly excellent.

Dieleman discovered that “at the topmost fully-connected layer of the network, just before the output layer, the learned filters turn out to be very selective for certain subgenres”. The network could classify songs into subgenres such as gospel, Chinese pop and deep house; sample playlists for each are included in the post.

The algorithm can be used to filter out outliers with little aural similarity, as well as intros, outros and cover songs. But the key use is making recommended playlists smarter, filling them with obscure or upcoming music you could fall in love with but never knew existed.
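One plausible way to spot a track with little aural similarity to its neighbours is to compare audio-derived feature vectors. The sketch below is an assumption rather than the method described in the report: the three-number feature vectors are invented for illustration, and `cosine` and `least_similar` are hypothetical helper names.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def least_similar(playlist, features):
    """Return the track whose features are least like the playlist average."""
    vectors = [features[track] for track in playlist]
    centroid = [sum(column) / len(vectors) for column in zip(*vectors)]
    return min(playlist, key=lambda track: cosine(features[track], centroid))


# Invented feature vectors standing in for what a network might learn from audio.
features = {
    "track_1": [0.9, 0.1, 0.0],
    "track_2": [0.8, 0.2, 0.1],
    "track_3": [0.85, 0.15, 0.05],
    "track_4": [0.0, 0.1, 0.9],
}

print(least_similar(list(features), features))  # track_4
```

Because the comparison uses only what the tracks sound like, it works just as well for a song with no listening history at all.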

News source: Gigaom

(Images: Spotify)
