When attending an Open Data conference in Germany these days, one might wonder whether the participants have discovered a new species. A mysterious species that knows what to do with data. An intelligent species that is able to find treasures on confusing websites and to understand government speech full of bulky words. A species that somehow miraculously takes the CSV files and WMS layers thrown at it and turns them into beautiful new applications for the benefit of all.

Let me summarise some of the reasons why the Open Data discussion in Germany is missing out on major opportunities.

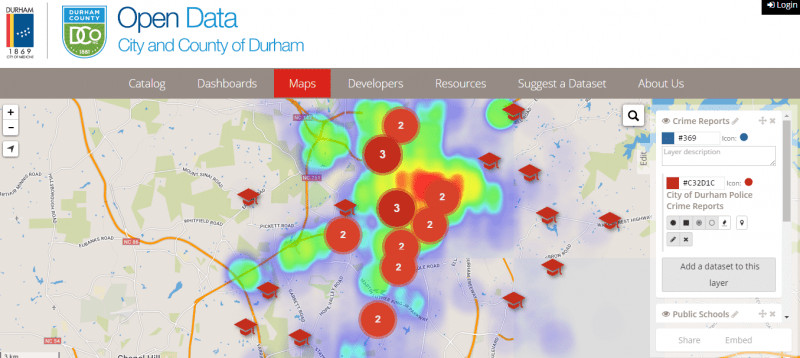

First of all, I admit that I am biased. I am biased because before I immersed myself deeper in the world of Open Data, I stumbled across a French company called OpenDataSoft. A company that developed a platform because it believes that anyone should be able to publish and reuse data, whatever their level of technical expertise. Before I had seen a single German Open Data portal, I was already familiar with the portals of the Paris region Île-de-France and of the US city and county of Durham, N.C. I thought this was the standard and that it also applied to Germany. Boy, was I wrong…

There are over 40 open data portals in Germany – including municipalities, regions and other organisations. Around 30 cities publish open data on some sort of portal, meaning there is a dedicated page on which users find structured data ready to download. This is a good start, for sure, until you realise that this represents merely 40% of all cities with more than 100,000 inhabitants.

However, what’s even more worrying than the quantity is the quality of some of these portals, not to mention the data they publish. Do not get me wrong: there are amazing Open Data projects of cities like Bonn, Moers and many others. Countless committed pioneers have done a remarkable job in building an ecosystem and pushing the open data topic forward in Germany.

When it comes to a lot of other portals, however, one cannot help but wonder whether the publishers confuse “open data” with “open information.” Just to recap: Open Data refers to machine-readable, structured data. Hence, publishing information in PDF or Word documents does NOT mean Open Data. Publishing largely aggregated data in Excel files with multiple sheets does NOT equate to Open Data. Lastly, even the many geo portals that offer a WMS (which delivers rendered map images) without an accompanying WFS (which delivers the underlying data points) CANNOT be considered Open Data. This would be as if someone took a picture of a map in their grandfather’s atlas and then uploaded it somewhere. How are developers supposed to build anything on top of that?

Let’s imagine a typical Open Data use case: Alex the developer wants to build a transportation app for her mid-sized hometown in Germany. Today, the process to get the data she needs to build such a service might look like this:

- First, she will have to navigate her way through a myriad of bulky sites to find a downloadable file. It might be called something like “Public transp. network cat. Bus_UPDATED_122015” (we Germans love long titles).

- She may assume that this file contains the bus timetables for the city centre and rejoices as hardly any public transport providers publish this kind of information as Open Data. She then:

- May have to register and outline the reason for her request, or

- May be totally in luck and find a CSV file – yeah.

- She will then have to download this file

- Open it in Excel

- Be confused by strange column titles such as “ID_Str” or “Abkr._ref”

- Go back to the original page to download an accompanying PDF that describes the abbreviations used in the CSV file…

- …and finally she will realise that instead of the timetable the file contains only the location of bus stops (as addresses, not as geopoints).

- Still motivated?

Even if she finds the information she is looking for, she will first have to perform some data cleaning before transforming the file into the needed format. All this before she can combine it with additional data.
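The cleaning step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the meanings given to `ID_Str` and `Abkr._ref` in `COLUMN_LABELS`, the semicolon delimiter, the helper name `clean_rows` and the sample rows are all invented for the example. In practice the labels would have to be copied by hand from the accompanying PDF.

```python
import csv
import io

# Hypothetical data dictionary: the real meanings would come from the PDF
# that accompanies the file. These labels are invented for illustration.
COLUMN_LABELS = {
    "ID_Str": "street_id",
    "Abkr._ref": "abbreviation_ref",
}


def clean_rows(raw_text, labels, delimiter=";"):
    """Parse delimited text, rename documented columns and trim whitespace.

    Returns (rows, unknown_columns) so that undocumented columns are
    reported instead of being silently passed along.
    """
    reader = csv.DictReader(io.StringIO(raw_text), delimiter=delimiter)
    fieldnames = [name.strip() for name in (reader.fieldnames or [])]
    unknown = [name for name in fieldnames if name not in labels]
    rows = []
    for record in reader:
        cleaned = {}
        for key, value in record.items():
            if key is None:
                # Surplus cells beyond the header row: skipped in this sketch.
                continue
            key = key.strip()
            cleaned[labels.get(key, key)] = (value or "").strip()
        rows.append(cleaned)
    return rows, unknown


# Invented sample in the style of the file described above.
SAMPLE = "ID_Str;Abkr._ref;Name\n 17 ;HBF; Hauptbahnhof \n 18 ;RTH; Rathaus \n"

rows, unknown = clean_rows(SAMPLE, COLUMN_LABELS)
print(rows[0])
print(unknown)
```

Returning the list of undocumented columns alongside the rows is a deliberate choice here: it makes visible exactly which parts of the file still require a trip back to the publisher's documentation.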

Given this lengthy process it is not surprising that many communities wonder “Who is supposed to do anything with our data?” And they are right! One can hardly imagine that anyone would be willing to go through this process voluntarily.

Sadly, such a complicated approach towards Open Data results in frustration on both sides: government employees in charge of the program do not see the results they were hoping for, and end-users continue to struggle to use the published data.

In addition, Open Data remains something “abstract”: hard for “the average citizen” to grasp, and therefore hard to justify funding for.

As a country that has long hesitated to pursue an Open Data strategy more seriously, Germany is representative of many other countries facing the same challenges in opening up data. At the same time, countries like Germany have the opportunity to benefit from the best practices seen in more advanced Open Data regions.

If we want Open Data to become a basic commodity, we first need to rethink the process of opening up data. Of course, many factors affect this process (most notably political and legal ones), but even in government agencies with a well-advanced Open Data mindset, one barrier relates entirely to the workflow itself. Today, it is usually the responsibility of a single manager to upload datasets forwarded to him or her via email. Often, this person has a wide range of other duties as well and may have little insight into the type of data that is available within the agency. This significantly slows down the process of opening up data. More importantly, it sidelines the dataset creator, who is usually best placed to ensure the quality of the dataset. Thus, we need to implement tools and processes that enable anyone – regardless of their technical expertise – to process and publish Open Data.

Second, data is only truly open when anyone can use it. Today, German Open Data portals exclusively address developers. This is a very small circle. Moreover, this very particular group must have a very precise idea of what they are looking for. When it comes to innovation, however, the greatest breakthroughs are often made by accident. Open Data tools must therefore be conducive to this type of open discovery. Graphs and maps are not just “nice-to-have” features. Visualisations provide insights. They are essential for the “average citizen” – and government employee – to process and better comprehend data. Open Data should enable everyone to test assumptions in an easy and intuitive way. We should aspire to a world in which, given the right tools, every single community member can put their innovative potential to use.

Finally, as unbelievable as it may sound: in 2016, developers still have to check German Open Data portals regularly for updates to the datasets published on them. Real-time updates to Open Data thus remain a dream. But even on a more basic level, beyond some catalogue APIs, developers will have a hard time finding APIs to the datasets themselves. However, to foster innovation, data must be ready for reuse. No extracting, transforming, and loading. Just ready-to-go data upon which to build the next application. Thus, datasets need to be automatically transformed into APIs. And not just any APIs. These APIs need to be able to handle huge datasets with millions of data points that can be loaded in seconds. They must allow filtering down to each data point. And they need to be scalable and able to serve as the basis for real-time applications.
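To make the idea of filtering a dataset down to each data point more concrete, here is a minimal sketch in Python. It does not describe how any particular portal implements its API: the function `query_records`, its `filters`, `limit` and `offset` parameters, and the sample bus stops are all hypothetical, and a real service would sit behind HTTP and an indexed data store rather than a list in memory.

```python
def query_records(records, filters=None, limit=10, offset=0):
    """Return one page of records whose fields equal all given filter values.

    Mimics what a dataset API might do for a request such as
    ?district=Mitte&limit=10 (the parameter names are assumptions).
    Values are compared as strings, as they would arrive in a query string.
    """
    filters = filters or {}
    matches = [
        record
        for record in records
        if all(
            field in record and str(record[field]) == str(value)
            for field, value in filters.items()
        )
    ]
    return {
        "total": len(matches),
        "results": matches[offset:offset + limit],
    }


# Invented sample data: bus stops published with coordinates, not addresses.
STOPS = [
    {"stop_id": 1, "name": "Hauptbahnhof", "district": "Mitte", "lat": 50.73, "lon": 7.10},
    {"stop_id": 2, "name": "Rathaus", "district": "Mitte", "lat": 50.74, "lon": 7.10},
    {"stop_id": 3, "name": "Stadion", "district": "Nord", "lat": 50.76, "lon": 7.09},
]

page = query_records(STOPS, filters={"district": "Mitte"}, limit=1)
print(page["total"], page["results"][0]["name"])
```

Returning the total alongside a limited page of results is one common way to keep responses small even when a dataset holds millions of data points, at the cost of requiring the client to page through them.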

Of course, providing the right technology won’t solve all the challenges facing Open Data in Germany. There are many more questions to be answered relating to licenses and privacy rights, for example. However, if we want to truly exploit the benefits of Open Data, we need to make sure that when attending an Open Data conference in the future, anyone can contribute to the discussion.
