Business intelligence, or BI as it is commonly known, has gained considerable popularity over the past decade. Although the term was first introduced in 1865 and picked up momentum in the mid-to-late 1980s, it was not until the early 1990s that the phrase entered public discourse. Now BI is widely deployed among business users across all industry verticals – including retail, ecommerce, healthcare, banking, hospitality, and even sports.

In this three-part series, we will look at the history of BI by decade – starting with the 1960s and 70s, all the way through to the turn of the millennium – to analyze both how the term has evolved and where it might be heading.

The History of Business Intelligence

Historically, information has been an essential ingredient in the quest for advanced knowledge. Information forms the backbone of almost every seminal innovation – scientific or otherwise – from Michael Faraday’s discovery of electromagnetic induction and Charles Babbage’s mechanical computer, to the sequencing of the human genome. Fundamentally, information is about answers, and answers, we have repeatedly learned, lead to insights.

Information, however, has many forms and applications. For business users, information is primarily found in data, and more specifically in business data, which covers sales, accounting, human resources and production and, more generally, people, products and places.

With this data, businesses can “yield significant information to provide historical, current, and predictive views of business operations” and improve performance considerably. This is, at its core, what business intelligence is about. But before we define the term, knowing its history will help us understand the terms and processes involved in BI.

The 1960s and 70s

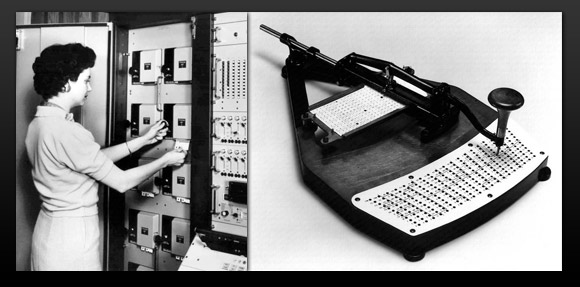

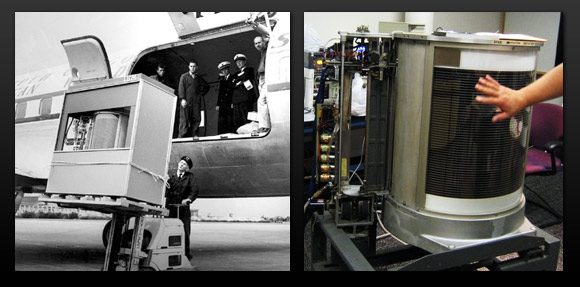

Before computers and digital storage were invented, business data was kept in physical filing systems. However, after IBM invented the hard disk in 1956 (which at the time could store 5 MB of data), and storage technologies such as the floppy disk became more widespread, traditional methods like punch cards came to an end. As computers arrived, businesses started using laserdiscs, magnetic tape, and larger, more efficient hard disks to store their data.

Yet, while the storage of data advanced, companies realised that these devices were expensive, difficult to manage, and time-consuming when it came to extracting data. Above all, there was no centralised method, or technology, that could bring all of this data together. This is where hierarchical Database Management Systems (DBMS), like IBM’s IMS, started to pick up. This type of DBMS arranged data in a tree structure: a parent record could have many child records, but each child record had exactly one parent. The benefits were multifarious; there was “less redundant data, data independence, security and integrity, which all lead to efficient searches.” Further experiments with these systems, like the CODASYL group’s network DBMS (where each child record could have multiple parents, instead of simply one), paved the way for additional innovation in data organization.
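
To make the difference between the two models concrete, here is a minimal Python sketch. It is an illustration only: the `Record` class, the `link` and `paths_to` helpers and the record names are hypothetical, and nothing here reflects how IMS or a CODASYL system was actually implemented. It only shows the structural rule: one parent per child in a hierarchical model, several parents allowed in a network model.

```python
class Record:
    """A hypothetical record with links to its parents and children."""

    def __init__(self, name):
        self.name = name
        self.parents = []
        self.children = []


def link(parent, child, hierarchical):
    """Attach child to parent; the hierarchical model rejects a second parent."""
    if hierarchical and child.parents:
        raise ValueError(f"{child.name} already has a parent")
    parent.children.append(child)
    child.parents.append(parent)


def paths_to(record):
    """Return every root-to-record path, i.e. every way to navigate to it."""
    if not record.parents:
        return [[record.name]]
    return [path + [record.name]
            for parent in record.parents
            for path in paths_to(parent)]


# Network model: one invoice record is reachable from two departments.
sales = Record("Sales")
finance = Record("Finance")
invoice = Record("Invoice #1")
link(sales, invoice, hierarchical=False)
link(finance, invoice, hierarchical=False)
print(paths_to(invoice))
```

In the network case the invoice is stored once and reached by two paths. Under the hierarchical rule the second `link` call would raise an error, so the same invoice would have to be duplicated under each department.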

While DBMSs radically changed the way data was collected, stored and entered, access to this data remained difficult. It was not until the mid-1970s that leading business intelligence vendors, like SAP, Siebel Systems, and JD Edwards, started offering business applications to companies so that they could access and analyse their data. Prior to this,

“End-Users had to wait for everything…the earlier tools designed for query and reporting were sold as do-it-yourself solutions, though the idea was fascinating the solutions at times did very little solving at all.” (source)

Vendors like these helped greatly with the automation of systems and increased the amount of data available. However, because there was no infrastructure for data exchange and the systems were incompatible with one another, collecting data remained a big challenge. Although access had improved, it was still difficult: data was entering from multiple sources and business applications, and each source could only be accessed individually. As a result, “this led to a one dimensional approach, and could only provide access in silos, ultimately resulting in fragmented data.” (source)

RDBMS & the Emergence of the Data Warehouse

The real success of the 70s was the emergence of relational database management systems (RDBMS). Edgar Codd, an IBM researcher at the time, saw the navigational model of CODASYL’s approach as problematic: users had to work manually through a great deal of complexity to find the data they needed. In his paper, “A Relational Model of Data for Large Shared Data Banks,” Codd completely transformed the way databases were conceived, from “…a simple means of organization” to “a tool for querying data to find relations hidden within.” In essence, Codd’s idea made it easier for people to enter and “grab” the data they required. As one article explains,

“All tables will be then linked by either one to one relationships, one to many, or many to many. When elements took space and were not useful, it was easy to remove them from the original table, and all the other “entries” in other tables linked to this record were removed.”
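
The behaviour described in this quote can be sketched with Python’s built-in `sqlite3` module. This is a hedged, modern illustration, not a reconstruction of the 1970s systems: SQLite postdates them by decades, the `customers` and `orders` tables are hypothetical, and `ON DELETE CASCADE` is just one way a relational database can remove linked entries automatically.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite only enforces foreign keys when this pragma is switched on.
conn.execute("PRAGMA foreign_keys = ON")

# Two hypothetical tables in a one-to-many relationship:
# one customer can have many orders.
conn.executescript("""
    CREATE TABLE customers (
        id   INTEGER PRIMARY KEY,
        name TEXT NOT NULL
    );
    CREATE TABLE orders (
        id          INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL
                    REFERENCES customers(id) ON DELETE CASCADE,
        item        TEXT NOT NULL
    );
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
    INSERT INTO orders VALUES (10, 1, 'paper'), (11, 1, 'ink'), (12, 2, 'toner');
""")

# Query by relationship instead of navigating record by record.
rows = conn.execute("""
    SELECT c.name, o.item
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.id
    ORDER BY o.id
""").fetchall()
print(rows)

# Deleting a customer also removes the orders linked to it.
conn.execute("DELETE FROM customers WHERE id = 1")
remaining = conn.execute("SELECT item FROM orders").fetchall()
print(remaining)
```

The join states what data is wanted and leaves it to the database to work out how to find it, which is the shift away from manual navigation that Codd argued for.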

Although two RDBMS projects were developed and launched in the 70s (IBM’s System R and INGRES from the University of California, Berkeley), it was not until the late 1980s and early 1990s that RDBMSs really took off. Furthermore, the term “data warehouse” emerged in the 70s and would ultimately change the way people, and specifically business intelligence, would operate.

This will be the focus of the next edition.

(Image Credit: Header, Flickr; Second Image, Pingdom; Third Image, Pingdom)

Furhaad worked as a researcher/writer for The Times of London and is a regular contributor to the Huffington Post. He studied philosophy on a dual programme with the University of York (U.K.) and Columbia University (U.S.). He is a native of London, United Kingdom.
